The Orchestra Thesis

Three years ago, the AI industry had one question: who can build the best model? OpenAI had GPT-4. Anthropic was scaling Claude. Google was rushing Gemini out the door. The entire conversation was about raw model capability: benchmarks, parameter counts, context windows, reasoning scores. That framing made sense at the time. The models were the bottleneck.

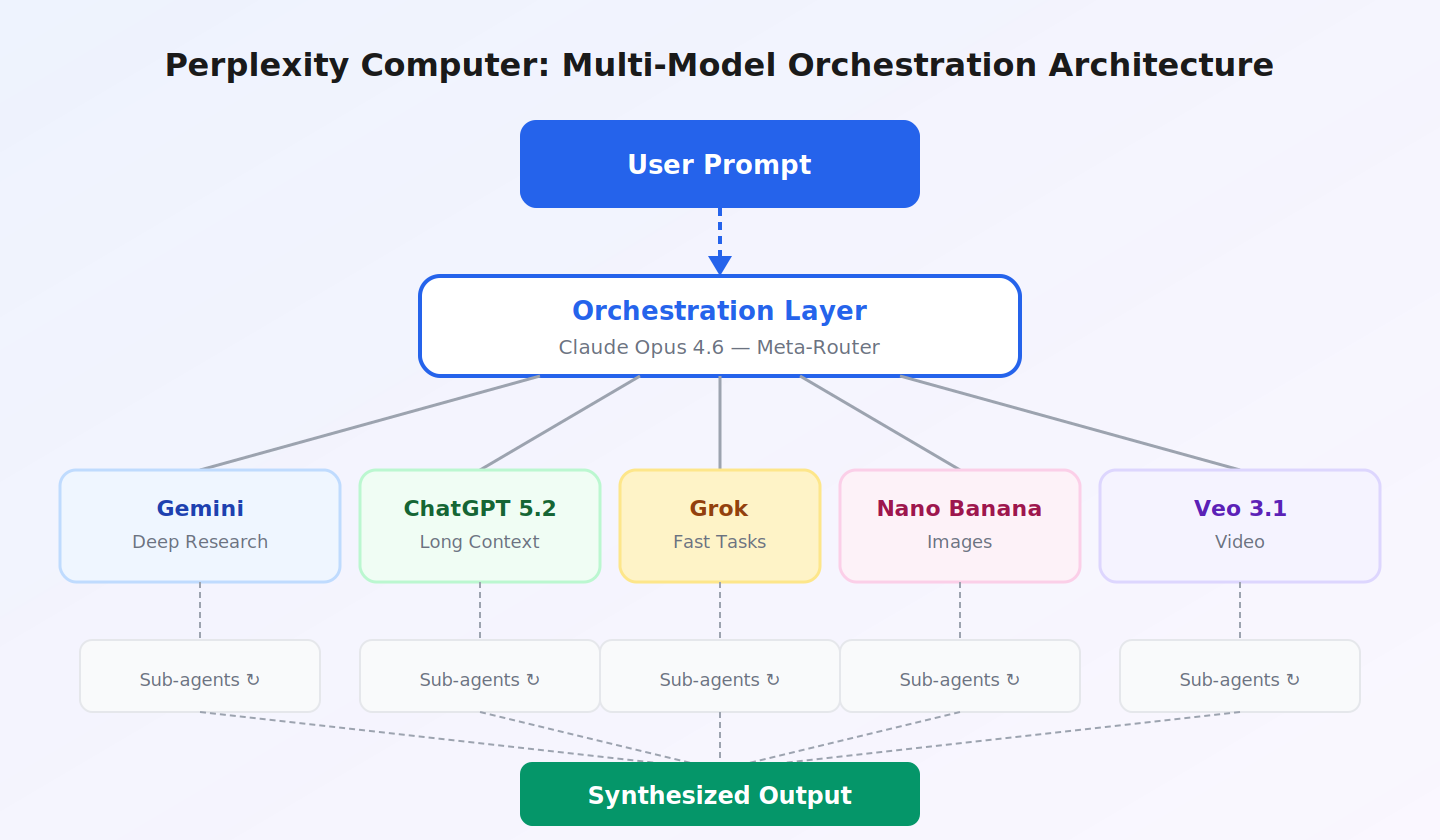

In February 2026, Perplexity launched a product that makes a fundamentally different bet. It is called Computer, and instead of competing on model quality, it orchestrates 19 models from six different providers into a single agentic system. Claude handles reasoning. Gemini does deep research. Grok runs fast lookups. Nano Banana generates images. The user describes an outcome, and the system decomposes the objective, routes subtasks to the right model, manages dependencies between agents, and delivers finished work. The whole thing runs in the cloud, asynchronously, while you do something else.

Perplexity does not train any of these models. It builds the layer on top. CEO Aravind Srinivas framed it explicitly: "The most powerful AI system isn't built on any single model. It's the one that can orchestrate all of them." That is a provocative claim in an industry that has spent tens of billions of dollars on foundation model training. It is also, I think, the most interesting strategic bet in AI right now.

Computer launched on February 25, days before demand for multi-step, real-time AI workflows spiked. Within a week, the U.S.-Israel war on Iran had closed the Strait of Hormuz; within three weeks, oil had broken $100 for the first time since 2022. Anyone tracking financial markets needed tools that could pull live data, cross-reference geopolitical events, and deliver structured analysis without juggling five different subscriptions and a lot of tab-switching. I used Computer to do exactly that, and the experience was different enough from anything else I have tried that I think it deserves a serious look.

But Perplexity is not operating in a vacuum. OpenClaw, the open-source AI agent that went viral in January 2026, offers a radically different approach to autonomy. Claude Cowork, Anthropic's desktop agent, is arguably the most polished single-model agent available. And every major tech company, from Microsoft to Google to Meta, is building its own agentic products. The question is not whether agents are the future. The question is which architecture wins.

What Perplexity Computer Actually Is

The easiest way to understand Computer is to think about what it replaces. Before it existed, a complex research task meant opening Claude for analysis, switching to ChatGPT for web search, using Gemini for long-document synthesis, firing up a separate image generator for visuals, and then manually stitching everything together. Five tools, constant context-switching, a lot of copy-pasting between tabs.

Computer collapses all of that into a single prompt. Under the hood, it runs Claude Opus 4.6 as its core reasoning engine and meta-router. When a task arrives, the orchestrator evaluates it, decomposes it into subtasks, and routes each subtask to the model best suited for it. The routing considers task type, estimated complexity, latency requirements, and even user history. If you mostly write Python, coding queries get pre-contextualized for Python automatically.

Computer decomposes prompts into subtasks, routes each to a specialized model, and synthesizes the results. Source: Perplexity
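Perplexity has not published its router, but the routing step described above can be sketched in a few lines. This is a minimal sketch under stated assumptions: the task categories, the `ROUTES` table, and the `route()` helper are hypothetical, the model assignments follow the roles listed in the model table in this piece, and a real meta-router would score complexity and user history rather than look up a fixed table.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

# Hypothetical routing table: task category -> model.
# Assignments follow the roles described in this article; the lookup
# approach itself is an illustrative assumption, not Perplexity's router.
ROUTES = {
    "reasoning": "Claude Opus 4.6",
    "code": "Claude Opus 4.6",
    "deep_research": "Gemini",
    "wide_search": "ChatGPT 5.2",
    "quick_lookup": "Grok",
    "image": "Nano Banana",
    "video": "Veo 3.1",
}
FAST_MODEL = "Grok"  # speed-optimized choice for latency-sensitive work


@dataclass
class Subtask:
    kind: str                       # one of the ROUTES keys
    prompt: str
    latency_sensitive: bool = False


def route(task: Subtask, preferred_language: Optional[str] = None) -> Tuple[str, str]:
    """Pick a model for a subtask and return (model, prompt)."""
    if task.latency_sensitive:
        # Latency requirements override task type in this sketch.
        model = FAST_MODEL
    else:
        # Unknown categories fall back to the general reasoning model.
        model = ROUTES.get(task.kind, ROUTES["reasoning"])
    prompt = task.prompt
    if task.kind == "code" and preferred_language:
        # "Pre-contextualize" coding prompts using the user's history.
        prompt = f"[Language: {preferred_language}] {prompt}"
    return model, prompt


print(route(Subtask("code", "Parse this CSV"), preferred_language="Python"))
# ('Claude Opus 4.6', '[Language: Python] Parse this CSV')
```

Letting latency override task type is a deliberate simplification; a production router would more plausibly weigh these factors together.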

When Computer encounters a problem during execution, it creates sub-agents to solve it. A single prompt like "Research the top five CRM platforms, compare their pricing, and create a recommendation spreadsheet" triggers at least three sub-agents: a web research agent for current data, an analysis agent for structuring the comparison, and a code execution agent for generating the spreadsheet. Each sub-agent runs in an isolated compute environment with access to a real filesystem, a real browser, and real tool integrations. If the analysis agent needs data the research agent has not returned yet, the orchestrator queues the task until the dependency is met. That dependency management matters because it removes a common source of hallucination: the system will not generate analysis based on assumed data.
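The dependency rule (no sub-agent starts until its inputs exist) is a topological scheduling problem. A minimal sketch using Python's standard-library `graphlib`, assuming hypothetical sub-agent names for the CRM example and a stand-in `run_agent()` that does no real work:

```python
from graphlib import TopologicalSorter

# Hypothetical sub-agents for the CRM comparison example.
# Each key maps to the set of sub-agents whose output it needs.
DEPENDENCIES = {
    "research_pricing": set(),
    "research_features": set(),
    "analysis": {"research_pricing", "research_features"},
    "spreadsheet": {"analysis"},
}


def run_agent(name, inputs):
    """Stand-in for a real sub-agent: records which upstream outputs it received."""
    return f"{name}({', '.join(sorted(inputs))})"


def orchestrate(deps):
    sorter = TopologicalSorter(deps)
    sorter.prepare()
    results, order = {}, []
    while sorter.is_active():
        # get_ready() yields only sub-agents whose dependencies have all
        # finished; anything still waiting stays queued. Nodes returned
        # together could run concurrently; this sketch runs them in turn.
        for node in sorter.get_ready():
            inputs = {dep: results[dep] for dep in deps[node]}
            results[node] = run_agent(node, inputs)
            order.append(node)
            sorter.done(node)
    return order, results


order, results = orchestrate(DEPENDENCIES)
print(order[-2:])            # ['analysis', 'spreadsheet']
print(results["analysis"])   # analysis(research_features, research_pricing)
```

The analysis step never runs with partial inputs: it only becomes ready after both research agents call `done()`.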

Everything runs asynchronously in the cloud. You can close your laptop, and the task keeps running. Perplexity says workflows can execute for hours, days, or even months. Computer is available to Perplexity Max subscribers at $200/month, with 400+ app integrations and persistent memory across sessions.

The Model-Agnostic Advantage

Here is the part that I think most coverage has missed. Perplexity does not train its own frontier models. That sounds like a weakness. It is actually the structural advantage that makes the whole thing work.

Consider the incentive structure. OpenAI, Anthropic, and Google all build both the models and the products that use them. That creates a lock-in problem. If you are using ChatGPT and a competing model suddenly becomes the best at coding, switching costs are high. You lose your conversation history, your custom instructions, your workflows. Each company wants you in their ecosystem, using their model for everything, even tasks where their model is not the best option.

Perplexity has no such incentive. Since it does not train models, it has zero loyalty to any specific provider. When a better model appears for a specific task, Perplexity can swap it in immediately. The model-agnostic architecture means the best model for the job always wins, regardless of who built it. Perplexity's own internal data supports the thesis: user model preferences shifted from 90% of queries routing to just two models in January 2025 to no single model commanding more than 25% of usage by December 2025. Models are specializing, not converging.
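The two statistics measure concentration differently: one is the combined share of the top two models, the other is the largest single model's share. A small sketch with invented query counts (not Perplexity's data) shows how each is computed:

```python
def concentration(counts):
    """Return (top-two share, largest single-model share) of routed queries."""
    total = sum(counts.values())
    shares = sorted((n / total for n in counts.values()), reverse=True)
    return sum(shares[:2]), shares[0]


# Invented counts for illustration only.
early = {"model_a": 60, "model_b": 30, "model_c": 6, "model_d": 4}
late = {"model_a": 24, "model_b": 22, "model_c": 20, "model_d": 18, "model_e": 16}

for label, counts in (("concentrated", early), ("spread out", late)):
    top_two, top_one = concentration(counts)
    print(f"{label}: top two {top_two:.0%}, largest {top_one:.0%}")
```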

| Model | Role in Computer | Specialty |
|---|---|---|
| Claude Opus 4.6 | Core reasoning engine, orchestrator | Complex reasoning, code, analysis |
| Gemini | Deep research, sub-agent creation | Long-context synthesis, multi-doc analysis |
| ChatGPT 5.2 | Long-context recall, wide search | Broad retrieval, conversational recall |
| Grok | Lightweight, fast tasks | Speed-optimized queries, quick lookups |
| Nano Banana | Image generation | Visual assets, design |
| Veo 3.1 | Video generation | Motion content, video assets |

Source: Perplexity, TechCrunch

The competitive dynamic this creates is interesting. The model providers are simultaneously Perplexity's suppliers and its competitors. OpenAI wants you using ChatGPT directly. Anthropic wants you in Claude. Google wants you in Gemini. But all three also sell API access, and Perplexity is a paying customer. As long as the economics work, providers have a financial incentive to keep supplying Perplexity even as they compete with it. The risk is that providers restrict API access to protect their own agent products. But doing so would undermine their API businesses, which are enormous revenue streams. Historically, the open-access side of that tension tends to win.

The Agent Landscape: Three Philosophies

Perplexity Computer entered a market where two other products had already captured significant attention: OpenClaw, the open-source AI agent that went viral in January, and Claude Cowork, Anthropic's desktop agent that rattled Wall Street when it launched earlier this year. Each represents a genuinely different philosophy about what an AI agent should be, and understanding those differences matters more than picking a winner.

OpenClaw: The Self-Hosted Maximalist. OpenClaw is a free, open-source agent created by Austrian developer Peter Steinberger. It runs locally on your own hardware, connects to messaging platforms like WhatsApp, Telegram, Discord, and iMessage, and can execute real-world tasks: managing emails, browsing the web, scheduling calendar events, controlling smart home devices, running shell commands. It reached 247,000 GitHub stars by early March 2026 and became a cultural phenomenon in China, where local governments in Shenzhen began subsidizing OpenClaw-related ventures. The project is model-agnostic in a different way from Perplexity. You bring your own API key from whatever provider you prefer, and OpenClaw routes through that model.

The philosophy is radical self-sovereignty. You own the hardware. You own the data. The agent runs 24/7 as a persistent background service, remembering context across conversations, and no company can take it away from you. It is the Linux ethos applied to AI agents. The tradeoff is complexity and risk. Setting up OpenClaw requires Node.js, command-line comfort, and a willingness to manage API keys, permissions, and security configurations manually. Cisco's AI security team tested a third-party OpenClaw skill and found that it exfiltrated data without the user's knowledge. Palo Alto Networks warned of a "lethal trifecta" of risks arising from the agent's broad system access. One of OpenClaw's own maintainers told users on Discord that if you cannot understand how to run a command line, the project is "far too dangerous" to use safely.

Claude Cowork: The Desktop Specialist. Claude Cowork launched in January 2026 as a research preview inside the Claude Desktop app. Anthropic built it in about two weeks, largely using Claude Code itself. The concept is elegant: you point it at a folder on your local machine, describe a task, and Claude reads, writes, and creates files in that sandboxed environment. It can reorganize a messy downloads folder, generate expense reports from receipt screenshots, synthesize research from scattered notes, or batch-rename hundreds of files. The experience feels, as Anthropic describes it, "less like a back-and-forth and more like leaving messages for a coworker."

The architecture is notable for how seriously it takes safety. Every Cowork task runs inside a virtual machine using Apple's Virtualization Framework (on Mac), meaning the agent is sandboxed at the OS level. Simon Willison, who reverse-engineered the Claude app, discovered that it boots a custom Linux root filesystem inside a VM for each session. Users must explicitly grant folder access, and the agent cannot touch anything outside that boundary. This is a meaningfully different security model from OpenClaw's, where the agent inherits whatever system permissions you give it.
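The folder grant behaves like a path boundary. Cowork enforces it at the VM level, which, as described above, does not depend on the agent's own code calling a check. Purely to illustrate the boundary itself, here is an application-level version in Python; `is_within_grant()` is a hypothetical helper, not part of any Anthropic API:

```python
from pathlib import Path


def is_within_grant(granted_folder: str, requested: str) -> bool:
    """Hypothetical check: does `requested` resolve inside `granted_folder`?"""
    root = Path(granted_folder).resolve()
    # Joining an absolute path replaces root entirely, so "/etc/passwd"
    # is tested as-is. resolve() collapses ".." and follows symlinks,
    # so escapes are judged by where the path actually points.
    target = (root / requested).resolve()
    return target == root or root in target.parents


print(is_within_grant("/tmp/project", "notes/todo.txt"))  # True
print(is_within_grant("/tmp/project", "../secrets.txt"))  # False
```

Resolving before comparing is the important step: a naive string-prefix check would accept `../` escapes and sibling folders such as `project-old` when the grant is `project`.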

Cowork's market impact was immediate and dramatic. Anthropic's updates in late February, which added connectors for Google Drive, Gmail, DocuSign, and FactSet plus enterprise plugins, triggered a $285 billion selloff in enterprise software stocks. Investors repriced companies whose core functionality overlapped with what Cowork could do. Microsoft responded by licensing the same Claude Cowork engine for its Copilot Cowork product across Microsoft 365, a striking move given Microsoft's $13 billion investment in OpenAI. The fact that Microsoft chose Anthropic's agent architecture over OpenAI's for its flagship M365 integration says something about the quality of Cowork's execution.

Perplexity Computer: The Cloud Orchestrator. Computer sits between these two extremes. It does not run locally like OpenClaw or Cowork. It does not use a single model like Cowork. And it does not require any technical setup like OpenClaw. The tradeoff is that you are trusting Perplexity's cloud infrastructure with your data and paying $200/month.

| Dimension | OpenClaw | Claude Cowork | Perplexity Computer |
|---|---|---|---|
| Architecture | Self-hosted, local | Desktop app, sandboxed VM | Cloud-based, multi-model |
| Model(s) | BYO API key (any model) | Claude only (Opus 4.6) | 19 models, auto-routed |
| Execution | Persistent, always-on | Session-based, app must be open | Async, runs hours/days/months |
| File access | Full system (whatever you grant) | Sandboxed folder in VM | Cloud filesystem only |
| Desktop control | Yes (shell, browser, apps) | Yes (within sandbox + Chrome) | No (cloud only) |
| Messaging integration | WhatsApp, Telegram, Discord, etc. | No | Slack (enterprise) |
| App integrations | 50+ skills, community-built | Hundreds (connectors + plugins) | 400+ connectors |
| Live financial data | No (requires custom skills) | Via FactSet connector | 40+ tools (SEC, FactSet, S&P, etc.) |
| Security model | User-managed permissions | OS-level VM sandboxing | SOC 2 Type II, SAML SSO |
| Price | Free (+ API costs ~$20-50/mo) | $20/mo (Pro) to $200/mo (Max) | $200/mo (Max only) |
| Adoption signal | 247K+ GitHub stars | $285B software stock selloff | $21B valuation, 45M+ users |
| Best for | Developers, tinkerers, privacy-first | Knowledge workers, file tasks | Research, multi-domain workflows |

Source: Wikipedia (OpenClaw), Anthropic Help Center, Perplexity Help Center, TechCrunch, CNBC

The table makes the architectural differences visible, but the more important distinction is philosophical. OpenClaw believes the user should own and control everything, with maximum power and maximum responsibility. Cowork believes in deep single-model capability with enterprise-grade safety guardrails. Computer believes the orchestration layer is the product, and no single model should be expected to do everything well. These are not just product decisions. They are bets about what the AI industry looks like in three years.

Why Orchestration Is the Interesting Bet

Each of these architectures has genuine strengths. OpenClaw's local execution means system tasks run without a round-trip to someone else's cloud, your files stay on your own hardware (apart from what you send to the model API), and there is no recurring subscription fee. Cowork's single-model depth means Anthropic can optimize the entire agent experience end-to-end without worrying about inter-model compatibility. Both are impressive engineering achievements.

But the orchestration approach has a structural advantage that becomes more valuable over time.

The AI industry has spent three years in a model-building arms race. Each company has poured billions into training the most capable single model. That race is not over, but the returns are changing. The gap between the best model and the second-best model is getting smaller with every generation, while the gap between the best model for reasoning and the best model for image generation or speed or long-context retrieval is getting wider. When frontier models are close enough in general capability that the difference is task-dependent, the orchestration layer becomes the value driver.

Perplexity's own data illustrates this. In December 2025, visual output queries most often routed to Gemini Flash, software engineering went to Claude Sonnet 4.5, and medical research went to GPT-5.1. No single model dominated even two of those categories. If you are locked into a single-model ecosystem, you are paying the "generalist tax" on every task where a specialist would do better.
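The "generalist tax" can be made concrete with a toy calculation. The quality scores below are invented for illustration; the sketch compares the best single model's average across tasks with routing each task to its best model, and it ignores routing cost and latency:

```python
# Invented quality scores (0-1) per task category; not real benchmark data.
SCORES = {
    "visual": {"model_a": 0.9, "model_b": 0.6, "model_c": 0.7},
    "coding": {"model_a": 0.6, "model_b": 0.9, "model_c": 0.7},
    "medical": {"model_a": 0.7, "model_b": 0.7, "model_c": 0.9},
}


def generalist_tax(scores):
    """Compare the best single model with per-task routing (quality only)."""
    tasks = list(scores)
    models = {m for per_task in scores.values() for m in per_task}
    # Single-model strategy: one model, chosen for the best average.
    averages = {m: sum(scores[t][m] for t in tasks) / len(tasks) for m in models}
    best = max(averages, key=averages.get)
    # Orchestrated strategy: the best model for each task separately.
    routed = sum(max(scores[t].values()) for t in tasks) / len(tasks)
    return best, averages[best], routed, routed - averages[best]


best, single, routed, tax = generalist_tax(SCORES)
print(f"best single model: {best} ({single:.2f}); routed: {routed:.2f}; tax: {tax:.2f}")
```

As in the December 2025 routing data, no model in this toy table wins more than one category, so any single-model choice pays a gap on two of the three tasks.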

There is a useful analogy from cloud computing. Abstraction layers built above commodity infrastructure (Kubernetes, Terraform) became strategic control points in their own right, letting customers treat the providers underneath as interchangeable. Perplexity is making the same bet for AI: the orchestration layer, not the model layer, is where value accrues. Four of the "Magnificent Seven" tech companies already use Perplexity's Search API in production. That is distribution that money cannot buy.

The counterargument is straightforward: if one company achieves genuine artificial general intelligence with a single model that dominates every task, the orchestration layer becomes irrelevant. But that is not what the data shows today. Today, models are specializing, and the gap between "best overall" and "best for this specific task" is widening.

The Numbers Behind Perplexity

Perplexity is no longer a scrappy startup. The company has reached a scale where the numbers tell their own story.

| Metric | Value (Early 2026) | Growth Context |
|---|---|---|
| Valuation | ~$21 billion | Up from $9B in Dec 2024 |
| Total Funding | $1.5B+ | Investors incl. NVIDIA, Bezos, SoftBank |
| Annual Recurring Revenue | ~$200M | Up from $35M mid-2024 (~470% growth) |
| 2026 Revenue Target | $656M | Requires ~230% growth from current ARR |
| Monthly Queries (est.) | 1.2-1.5B | Up from 780M in May 2025 |
| Monthly Active Users | 45M+ | 100%+ increase from early 2025 |
| Employees | ~250 | ~$800K ARR per employee |
| Countries Covered | 238 | 46 languages supported |

Source: TechCrunch, Wikipedia, DemandSage, VentureBeat

The $656 million revenue target is aggressive. It implies 230% growth from an already substantial base. Computer, priced at $200/month through the Max tier, is the product designed to close that gap. Perplexity also opened four developer APIs at its Ask 2026 conference (Search, Agent, Embeddings, and Sandbox), giving external developers access to the same orchestration infrastructure. The enterprise version adds Slack integration, SOC 2 Type II compliance, SAML SSO, and connectors to Snowflake and Salesforce.

The market timing is favorable. A February 2026 CrewAI survey found that 100% of surveyed enterprises plan to expand agentic AI usage this year. Fortune Business Insights projects the global agentic AI market will grow from $9.14 billion in 2026 to $139 billion by 2034, a 40.5% CAGR. Whether Perplexity captures a meaningful share depends on whether orchestration beats vertical integration.

Perplexity's ARR has grown roughly 470% since mid-2024. The 2026 target requires another 230% jump. Source: TechCrunch, VentureBeat
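The growth figures in the table and the market projection can be checked with simple arithmetic. A short Python sketch using the article's own figures, with the approximate ARR values treated as exact for the calculation:

```python
def growth_pct(start, end):
    """Percentage growth from start to end."""
    return (end / start - 1) * 100


def cagr_pct(start, end, years):
    """Compound annual growth rate, in percent."""
    return ((end / start) ** (1 / years) - 1) * 100


arr_mid_2024, arr_now, target_2026 = 35e6, 200e6, 656e6
employees = 250

print(f"ARR growth since mid-2024: {growth_pct(arr_mid_2024, arr_now):.0f}%")   # 471%
print(f"Growth needed for 2026 target: {growth_pct(arr_now, target_2026):.0f}%")  # 228%
print(f"ARR per employee: ${arr_now / employees:,.0f}")                         # $800,000
# Agentic AI market: $9.14B (2026) to $139B (2034), i.e. 8 years of compounding.
print(f"Implied CAGR: {cagr_pct(9.14, 139, 8):.1f}%")                          # 40.5%
```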

Perplexity Finance and the Iran Test Case

The place where Computer's orchestration advantage became tangible for me was Perplexity Finance. I have been building a fintech product for the past several months, and I needed to track how the Iran war was affecting energy markets in real time. Specifically, I wanted to monitor the relationship between IRGC military statements, Strait of Hormuz shipping traffic, and Brent crude futures pricing. That kind of cross-domain, real-time analysis is exactly what Computer was designed for.

Perplexity Finance integrates over 40 live financial tools drawing from SEC/EDGAR filings, FactSet, S&P Global, Coinbase, LSEG, Quartr for earnings call transcripts, and even Polymarket for prediction market data. It recently added brokerage integration via Plaid. The SEC filing integration alone is useful. Understanding a company's risk exposure to Middle Eastern supply disruptions used to mean manually searching EDGAR, finding the relevant risk factor sections, and reading through dense legal language. Now you can ask Computer to pull the energy supply risk factors from every S&P 500 company with significant oil and gas exposure, and it returns a structured comparison.

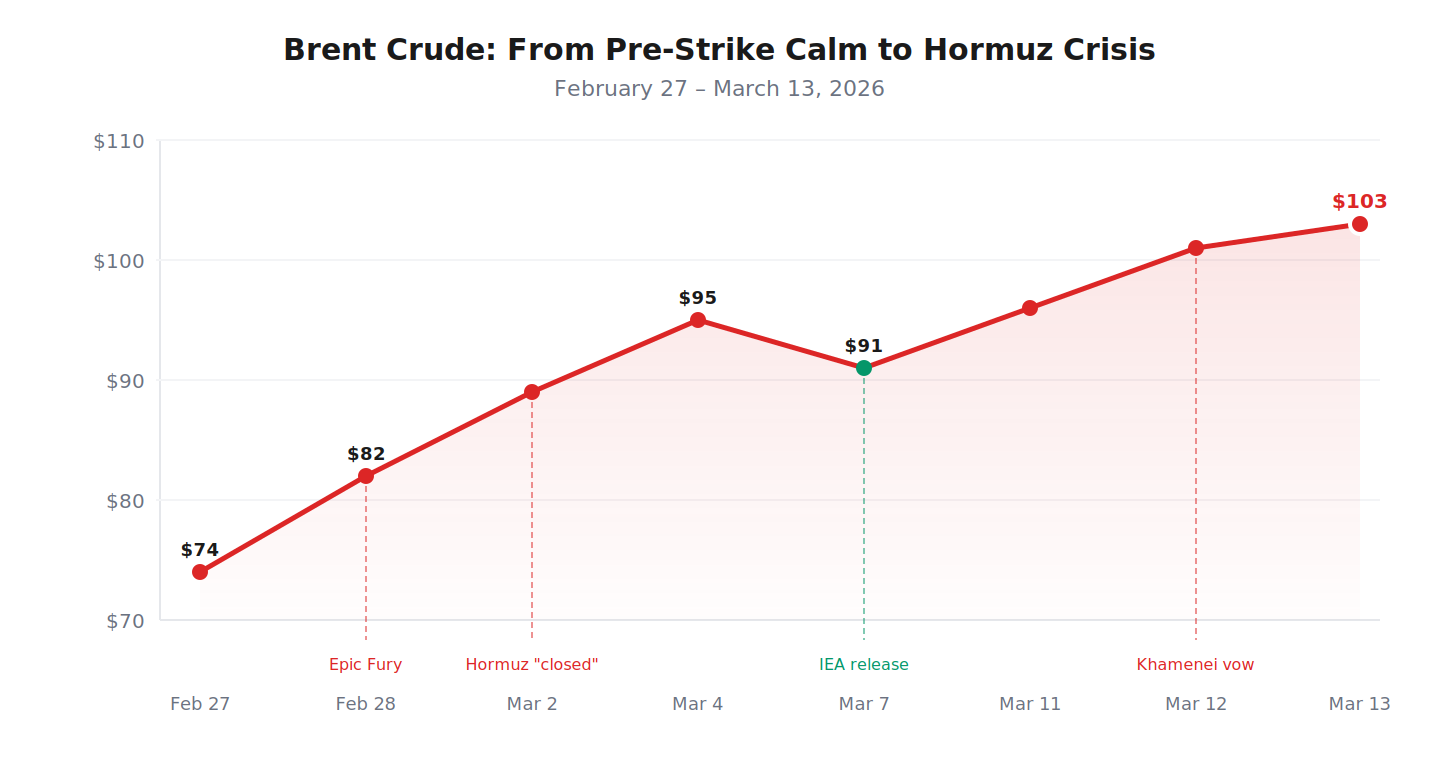

On the morning of February 28, when the U.S. and Israel launched Operation Epic Fury against Iranian military and nuclear facilities, I used Computer to set up a monitoring task: track Brent crude price movements, cross-reference every major news event and IRGC statement with the corresponding price reaction, and compile the results into a running timeline. It did this over the course of two weeks, asynchronously, pulling from S&P Global Platts, CNBC market data, and Reuters. Every data point came with source attribution I could verify.

| Date | Event | Brent Crude | Daily Change |
|---|---|---|---|
| Feb 27 | Pre-strike: markets pricing in risk | ~$74/bbl | +2.1% |
| Feb 28 | Operation Epic Fury launched | ~$82/bbl | +10.8% |
| Mar 2 | IRGC declares Hormuz "closed" | ~$89/bbl | +5.2% |
| Mar 4 | First tankers attacked in strait | ~$95/bbl | +4.1% |
| Mar 7 | IEA authorizes 400M barrel release | ~$91/bbl | -3.8% |
| Mar 11 | Thai cargo ship struck, 3 crew missing | ~$96/bbl | +3.2% |
| Mar 12 | Mojtaba Khamenei: Hormuz stays closed | ~$101/bbl | +8.0% |
| Mar 13 | Brent settles above $103 | $103.14/bbl | +1.9% |

Source: CNBC, Al Jazeera, S&P Global Platts

Brent crude surged 39% in two weeks as the Hormuz crisis unfolded. Source: CNBC, Al Jazeera, S&P Global Platts
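At its core, the running timeline the monitoring task produced pairs each event with a price and measures the move from the pre-strike baseline. A minimal sketch using the approximate prices from the table above (treated as exact; the live data retrieval that Computer handled is out of scope here):

```python
# Approximate Brent prices (USD/bbl) from the table above, treated as exact.
TIMELINE = [
    ("Feb 27", "Pre-strike: markets pricing in risk", 74.0),
    ("Feb 28", "Operation Epic Fury launched", 82.0),
    ("Mar 2", 'IRGC declares Hormuz "closed"', 89.0),
    ("Mar 4", "First tankers attacked in strait", 95.0),
    ("Mar 7", "IEA authorizes 400M barrel release", 91.0),
    ("Mar 11", "Thai cargo ship struck, 3 crew missing", 96.0),
    ("Mar 12", "Mojtaba Khamenei: Hormuz stays closed", 101.0),
    ("Mar 13", "Brent settles above $103", 103.14),
]


def cumulative_changes(timeline):
    """Pair each event with its price and % change from the first (baseline) row."""
    baseline = timeline[0][2]
    return [
        (date, event, price, round((price / baseline - 1) * 100, 1))
        for date, event, price in timeline
    ]


for date, event, price, change in cumulative_changes(TIMELINE):
    print(f"{date:<7} ${price:>7.2f}  {change:+5.1f}%  {event}")
```

This computes change since February 27, not the per-session "Daily Change" column, which cannot be recovered from non-consecutive rows. The final row comes out at +39.4%, matching the two-week figure above.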

This is the kind of workflow that exposes the orchestration advantage. A single-model agent cannot simultaneously do live financial data retrieval (requires specialized API integrations), geopolitical news synthesis (requires real-time web search with citation tracking), and multi-week asynchronous execution. Computer chains all three because it routes each capability to the model or data source best equipped for it. Neither OpenClaw nor Cowork has built-in access to 40+ institutional financial data sources. For anyone doing financial research without access to a Bloomberg terminal, this is currently the closest AI-powered alternative.

What Computer Cannot Do

The marketing makes Computer sound omnipotent. It is not. The limitations are real and worth understanding before paying $200/month.

First, it has no desktop control. It cannot see your screen, click buttons, or interact with local applications. OpenClaw can run shell commands, browse the web with a real browser, and automate anything on your machine. Cowork can read, write, and organize local files within a sandboxed folder, automate Chrome, and connect to growing libraries of desktop plugins. Computer runs entirely in the cloud. It cannot open your local files, interact with native apps, or automate your operating system. Perplexity announced "Personal Computer" at Ask 2026 (a Mac Mini-based system with local access) to address this gap, but it is waitlist-only.

Second, Computer had reliability issues at launch. TechCrunch reported that Perplexity canceled a live demo for press the day before the announcement because of flaws found hours before the event. In practice, I have seen occasional sub-agent failures where research tasks return incomplete results, or the orchestrator assigns a task to a model that is not ideal. The system is improving, but it is not yet reliable enough for workflows where errors carry real consequences.

Third, the $200/month price is steep. OpenClaw is free (you pay only API costs, typically $20-50/month for moderate usage). Cowork is available starting at $20/month on Claude Pro. You are paying a significant premium for multi-model orchestration, and whether that premium is justified depends entirely on how much time it saves relative to doing the routing manually.
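That break-even point is simple arithmetic. A sketch assuming a hypothetical $60/hour value of your time, and treating time saved as the only benefit (it ignores capability differences between the products):

```python
def breakeven_hours(computer_price, alternative_price, hourly_value):
    """Hours per month Computer must save to cover its price premium."""
    premium = computer_price - alternative_price
    return premium / hourly_value


# $200/mo Computer vs $20/mo Claude Pro, at a hypothetical $60/hour.
print(breakeven_hours(200, 20, 60))  # 3.0
# vs OpenClaw at the high end of the article's API estimate ($50/mo).
print(breakeven_hours(200, 50, 60))  # 2.5
```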

Fourth, the copyright lawsuits from the New York Times, Dow Jones, and BBC represent existential risk. Perplexity's entire value proposition depends on accessing and synthesizing web content. The company has signed revenue-sharing agreements with over 300 publishers, but the biggest names are not on board and are actively litigating. If courts restrict Perplexity's ability to scrape and summarize, the product degrades.

What This Means for the Industry

Step back from the individual products and look at what happened at the industry level. In the span of about eight weeks, from late January to mid-March 2026, three radically different visions of AI agents entered the market. OpenClaw proved that an open-source, self-hosted agent could go viral and achieve adoption from hobbyists to Chinese government-backed enterprises. Claude Cowork proved that a single-model desktop agent could be polished enough to threaten incumbent software companies and their stock prices. Perplexity Computer proved that a multi-model cloud orchestrator could unify capabilities that previously required five different tools.

None of these products existed a year ago. The structural shift underneath the noise is this: AI is moving from "tools you talk to" to "systems that do work." Chatbots answer questions. Agents complete tasks. The interface is becoming less important than the execution layer, and the execution layer is where the competition is fiercest.

The question for anyone building in this space is not "which agent is best today." It is "which architecture compounds over time." OpenClaw's answer is that open-source communities compound. Anthropic's answer is that deep single-model integration compounds. Perplexity's answer is that orchestration compounds. All three could be right. The market is big enough.

Perplexity is also unique in its position because of its search infrastructure. The company indexes over 200 billion URLs with tens of thousands of updates per second. That retrieval layer grounds Computer's outputs in citable sources, which makes hallucinations less likely and easier to catch. The combination of multi-model orchestration with a massive real-time search index is what separates Computer from just writing your own orchestration scripts with LangChain. You could replicate the model routing, but you cannot replicate the search index.

The enterprise play is where the real money is. Computer for Enterprise puts the system inside Slack channels, adds Snowflake connectors, and layers enterprise governance on top. VentureBeat reports Perplexity has tens of thousands of enterprise customers with only six people on its enterprise go-to-market team. That ratio is only possible because the product sells itself through usage. Whether it can scale against Microsoft Copilot (which licenses Cowork's engine for M365 integration) and Salesforce's agent offerings is the open question.

Where This Goes

If I had to bet, I think we end up in a world where all three approaches coexist, serving different segments. OpenClaw and its descendants will own the developer, tinkerer, and privacy-first segment: the people who want maximum control and accept maximum responsibility. Cowork and its competitors (including Microsoft's Copilot Cowork) will own the enterprise desktop segment, where safety, sandboxing, and deep integration with existing file systems matter most. Computer and similar orchestration layers will own the research-heavy, multi-domain workflow segment, where no single model is sufficient and the ability to chain capabilities across providers is the differentiator.

The wildcard is what happens to the model providers. If OpenAI, Anthropic, and Google decide to restrict API access to protect their own agent products, Perplexity's orchestration layer loses its supply chain. Srinivas has said publicly that model providers would hurt themselves by doing this, since API revenue is a major business for all of them. He is probably right in the short term. In the long term, if agents become the primary way people interact with AI rather than direct model access, the incentives could shift.

The trust question looms large too. Perplexity is three years old and asking enterprise CISOs to route sensitive data through its platform. Anthropic is five years old and has a $380 billion valuation partly because enterprises trust it enough to deploy Cowork across their organizations. OpenClaw has no company behind it at all, since Steinberger joined OpenAI in February and handed the project to an open-source foundation. The institutional credibility gap between these products is significant, even if the technology is comparable.

Steve Jobs once said, "Musicians play their instruments. I play the orchestra." Srinivas quoted that line when he launched Computer, and it captures the thesis precisely. The individual instruments are excellent. They are getting more excellent every quarter. The bet is that the conductor adds enough value to justify a seat at the concert, not by being better at any single instrument, but by knowing which one to play and when.

Three weeks in, I think the orchestra sounds different from the soloists. Whether "different" translates to "better enough to pay $200/month for" is a question the market will answer. But the thesis that orchestration is a durable layer of value, not just a stopgap until one model rules them all, is more compelling than the skeptics give it credit for. Watch this space.
